(Default location: $/db/snapshots)įorget all peers and become sole member of a new cluster. Number of snapshots to retain (default: 5)ĭirectory to save db snapshots. These options can be set in the configuration file: Options Install rke2 v1.20.8+rke2r1 on the first new server node as in the following example: Restore the certs in Step 1 above to the first new server node. See note below for an additional version-specific restore caveat on restore.īack up the following: /var/lib/rancher/rke2/server/cred, /var/lib/rancher/rke2/server/tls, /var/lib/rancher/rke2/server/token, /etc/rancher Warning: For all versions of rke2 v.1.20.9 and prior, you will need to back up and restore certificates first due to a known issue in which bootstrap data might not save on restore (Steps 1 - 3 below assume this scenario). This file is deleted when rke2 starts normally. This file is harmless to leave in place, but must be removed in order to perform subsequent resets or restores. When rke2 resets the cluster, it creates an empty file at /var/lib/rancher/rke2/server/db/reset-flag. Start RKE2 again, and it should run successfully and be restored from the specified snapshot. Result: After a successful restore, a message in the logs says that etcd is running, and RKE2 can be restarted without the flags. To pass the reset flag, first you need to stop RKE2 service if its enabled via systemd: RKE2 enables a feature to reset the cluster to one member cluster by passing -cluster-reset flag, when passing this flag to rke2 server it will reset the cluster with the same data dir in place, the data directory for etcd exists in /var/lib/rancher/rke2/server/db/etcd, this flag can be passed in the events of quorum loss in the cluster. See the full list of etcd-snapshot subcommands at the subcommands page Cluster Reset For example: rke2 etcd-snapshot save -name pre-upgrade-snapshot. You can take a snapshot manually while RKE2 is running with the etcd-snapshot subcommand. 
If you have multiple etcd or etcd + control-plane nodes, you will have multiple copies of local etcd snapshots. In RKE2, snapshots are stored on each etcd node. To configure the snapshot interval or the number of retained snapshots, refer to the options.

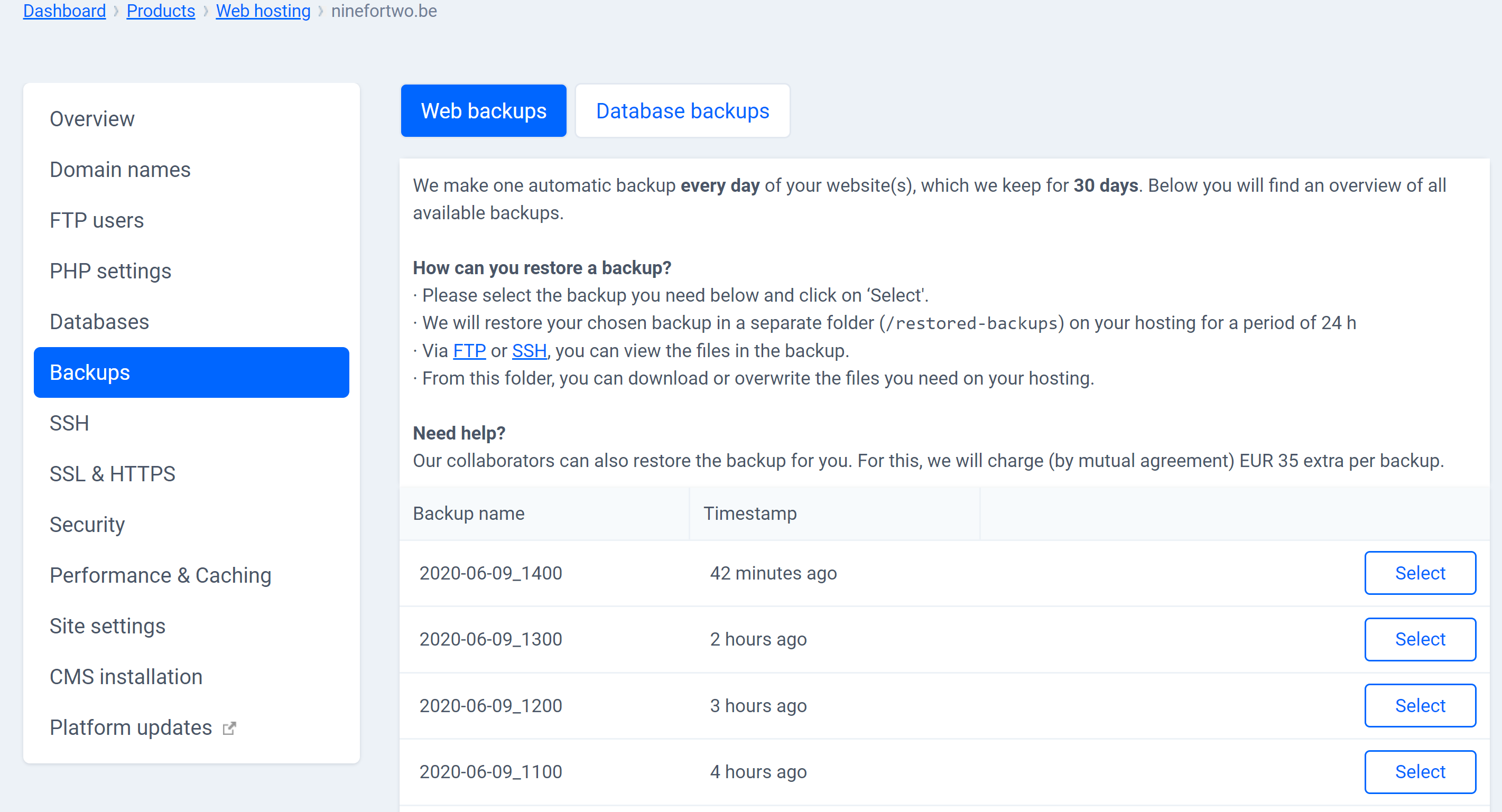

The snapshot directory defaults to /var/lib/rancher/rke2/server/db/snapshots. Note: /var/lib/rancher/rke2 is the default data directory for rke2, it is configurable however via data-dir parameter. In this section, you'll learn how to create backups of the rke2 cluster data and to restore the cluster from backup.
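The manual snapshot, cluster reset, and certificate backup steps described in this section could be scripted roughly as follows. This is a minimal dry-run sketch under stated assumptions, not an official procedure: the helper names (run, take_snapshot, reset_cluster, backup_certs), the DRY_RUN and DATA_DIR variables, the rke2-server systemd unit name, the archive path, and the use of tar are all choices made here for illustration. By default it only prints the commands it would run.

```shell
#!/bin/sh
# Dry-run sketch: commands are printed, not executed, unless DRY_RUN=0 is set.
# DRY_RUN, DATA_DIR and all function names are hypothetical helpers.
DRY_RUN="${DRY_RUN:-1}"

# Default rke2 data directory from the text; override if data-dir is customized.
DATA_DIR="${DATA_DIR:-/var/lib/rancher/rke2}"

# Print the command in dry-run mode, otherwise execute it.
run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

# Take a named on-demand snapshot while RKE2 is running.
take_snapshot() {
    run rke2 etcd-snapshot save --name "$1"
}

# Reset to a single-member cluster, for example after quorum loss.
reset_cluster() {
    # Assumes the service runs under systemd as rke2-server.
    run systemctl stop rke2-server
    # A leftover reset-flag file must be removed before another reset or restore.
    run rm -f "$DATA_DIR/server/db/reset-flag"
    run rke2 server --cluster-reset
}

# Archive the paths listed in the backup step (tar is an assumption here).
backup_certs() {
    run tar -czf "$1" \
        "$DATA_DIR/server/cred" \
        "$DATA_DIR/server/tls" \
        "$DATA_DIR/server/token" \
        /etc/rancher
}

# Example calls; in dry-run mode these only print the planned commands.
take_snapshot pre-upgrade-snapshot
reset_cluster
backup_certs /tmp/rke2-certs.tar.gz
```

Review the printed commands first. Setting DRY_RUN=0 executes them, which stops the server and resets cluster membership, so that mode should only be used on a node you intend to reset.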
